There is an inherent problem with large language models (LLMs) and the DoD’s classification (SECRET, TOP SECRET, TOP SECRET/SCI) hierarchy1. It deals with how LLMs learn patterns and relationships. They can deduce or infer new information within or across multiple data sources. Critically, this information may be unknown to humans until LLMs discover it.

Let me provide an exceedingly simple example of how this will be problematic. Here is my interaction with ChatGPT a few weeks back. I developed a description of two fictitious creatures, Omigars and Drevils. See Figure 1 (the description of an Omigar) and Figure 2 (the description of a Drevil) following. I asked ChatGPT to ingest and add those documents to its knowledgeable.

Figure 1. The article about Omigars

Figure 2. The article about Drevils

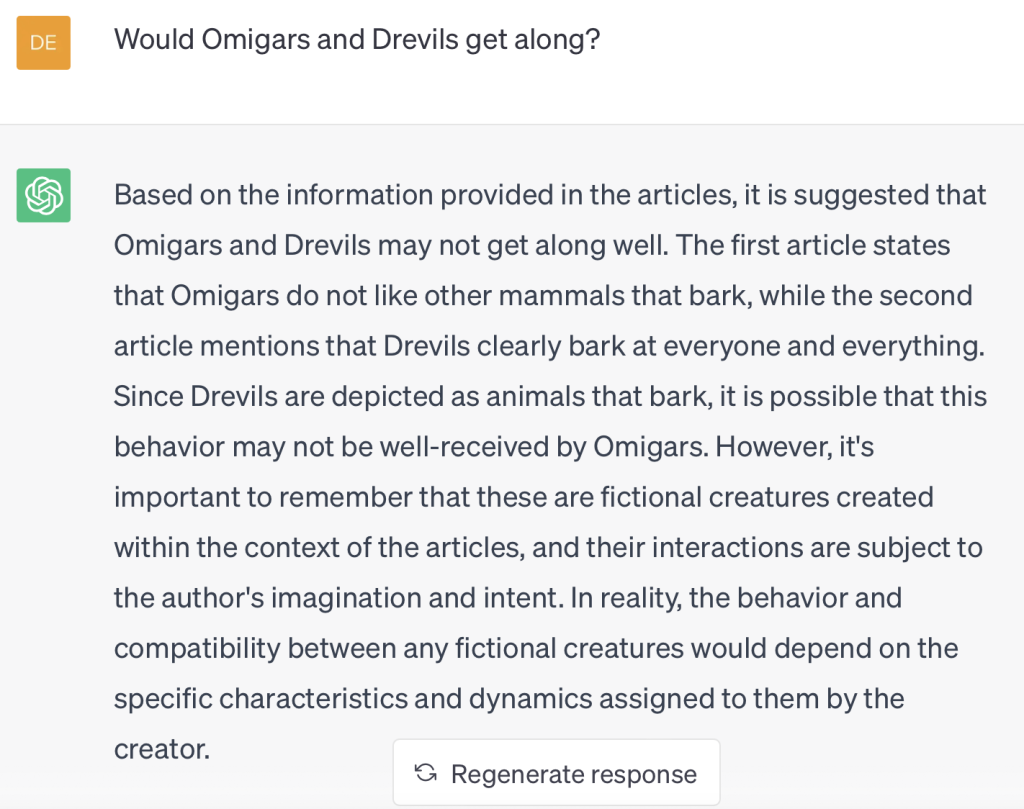

Next, I asked ChatGPT a series of questions about the documents. Figure 3 shows a question that requires pieces from both documents. Those familiar with DoD classification guidelines see how this is headed to a likely problem.

Figure 3. An example of new information

“Since Drevils are depicted as animals that bark, it is possible that this behavior may not be well-received by Omigars.“

Neither document itself states this potentially correct fact above. The LLM deduced this information. So, can the new information be guaranteed to be at the same classification level as the original information? It likely cannot. Here is why.

Virtually Infinite Answers

ChatGPT replied like this when asked if it could list out every possible answer to every possible question it could be asked2.

Figure 4. Can ChatGPT list out all its potential answers.

The DoD, however, would need a mechanism to iterate every possible, conceivable answer an LLM could offer. Each answer would need to be examined to determine its classification level. Many problems abound from this fact. Here are two:

- Human Intel Analyst Classification Proofers – There are not enough of these to verify each answer an LLM generates is at the appropriate classification level.

- LLM Classification Proofers – An intriguing idea. The SECRET level LLM would be connected with an LLM at a higher level. At that point, the LLM at the SECRET level could communicate with the LLM at the TOP SECRET level to proof answers. Note, those familiar with DoD systems realize what a technical pain this would be to implement. Regardless, what if the TOP SECRET LLM indicates for a provided input: “Yes – this answer contains information at the TOP SECRET classification.” The SECRET level model itself would be TOP SECRET. Immediately it would need to be taken off line and machine sanitization would begin.

Hasn’t This Always Been a Problem?

Yes, of course. Maintainers of DoD data sources go through great pains to ensure the data held at a classification level is appropriate for that level. This includes monitoring information that would allow for deducing or inferring classified information.

Also, there have been inference systems that allowed new information to be deduced or inferred from an existing DoD data set. However, the inferencing systems have a set of finite rules that could be understood by a humans (because they wrote them!) The lists of inferencing rules themselves are not exceedingly large. It is realistic to guarantee that the rule set for existing inferencing systems would not generate problematic classified content.

The new issue is the sheer number of models an LLM encapsulates. We speak of an LLM as a model. It can also be thought of as an aggregate or amalgamation of n numbers of models present in the training set. If the data set includes 1.) text reports about troop movements in an area for a date range, 2.) a sensor outputs for the same area and date range, and 3.) technical documents that provide details about the sensor itself, the LLM will learn the model about how those three data sets interact and are connected. It will be able to deduce or infer new facts a human has never considered (unless there exists a human who thoroughly understands all three data sets).

Conclusion

There will be many patterns, relationships, and linguistic structures present across a heterogenous set of data sources. The LLM will learn and generalize more of these patterns and relationships than a human could ever hope to. The problem for the DoD is, will some of these patterns and relationships be at a higher classification than the original data sources themselves? There is simply no way to guarantee they will not be.

Footnotes:

[1] For those interested – here is a definitive guide on how DoD classification works: https://www.dodig.mil/Portals/48/Documents/Policy/520001_vol2.pdf?ver=2017-04-25-160926-223

[2] There is a concept of drift in LLM model responses. This was explained to me by my colleague David Sheets. A drift value could be set that would provide the exact same reply each time for a given question. With an infinite number of questions, the problem would persist. For this interested, Dave has provided a series of YouTube posts about LLMs here: https://blogs.canisius.edu/generativeai/understanding-generative-ai-history-and-context/